Creepy, the geolocation information aggregator

What is Creepy?

So what is Creepy, and how does it come into the “geolocation” picture? Creepy is a geolocation information aggregation tool. It allows users to gather geolocation information that has already been published and made publicly available on a number of social networking platforms and image hosting services. In its current beta form, it harvests geolocation information from twitter in the form of geotagged tweets, from foursquare check-ins, from geotagged photos posted on twitter via image hosting services such as yfrog, twitpic, img.ly, plixi and others, and from Flickr. The geolocation information that Creepy retrieves is presented to the user as points on a navigable embedded map, along with a list of locations (geographic latitude and longitude accompanied by the date and time). Each location is associated with contextual information, such as the text that the user tweeted from that specific location, or the photo he or she uploaded. Let's walk through how to use Creepy before going into more detail on how and why it actually works.

Introduction — terminology

Terms and definitions can be boring, but I think it is important to start with some terminology, which will lay the basis for the rest of the discussion. Most of us have heard buzzwords such as geolocation, geotagging, EXIF and location-aware services. Let's try to define some of them.


Geolocation: Geolocation can be defined as the identification of the real-world geographic location of an entity. This entity can be an object (a vehicle or a building), a device such as a smart-phone, or even a person. The term is also used interchangeably for both the act of assessing the location and the assessed location information itself.

Geotagging: Geotagging is the process of adding geographical identification information to digital media, such as video and photographs. This can be done automatically by the device capturing the photograph (a smart-phone with an embedded GPS receiver or a digital camera with an attached external GPS receiver) or by manually editing the meta-data associated with the photograph, primarily using the EXIF standard.

EXIF: EXIF (Exchangeable Image File Format) is a standard that specifies the formats for images, audio and ancillary tags used by a number of devices (digital cameras, smart-phones) and other systems handling image and sound files. Geolocation information, such as GPS-derived latitude, longitude and speed, can be stored in and retrieved from digital images. The geolocation-related tags are specified in table 12 of version 2.2 of the specification (http://www.exif.org/Exif2-2.PDF).
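To make the EXIF definition more concrete: the GPSLatitude and GPSLongitude tags store a coordinate as three rational numbers (degrees, minutes, seconds), with the hemisphere kept separately in GPSLatitudeRef and GPSLongitudeRef. The following is a minimal sketch of turning such values into the decimal degrees that a map expects. The function name and the sample values are made up for illustration, and this is not taken from Creepy's source.

```python
from fractions import Fraction


def dms_to_decimal(dms, ref):
    """Convert EXIF-style degrees/minutes/seconds to decimal degrees.

    dms is a sequence of three (numerator, denominator) pairs, which is how
    EXIF stores rationals. ref is the hemisphere letter: N, S, E or W.
    """
    degrees, minutes, seconds = (Fraction(num, den) for num, den in dms)
    value = degrees + minutes / 60 + seconds / 3600
    # Southern latitudes and western longitudes are negative in decimal form.
    if ref in ("S", "W"):
        value = -value
    return float(value)


# Hypothetical tag values, shaped like GPSLatitude / GPSLongitude entries.
latitude = dms_to_decimal(((59, 1), (19, 1), (4572, 100)), "N")
longitude = dms_to_decimal(((18, 1), (30, 1), (0, 1)), "W")
print(latitude, longitude)
```

In practice a library such as pyexiv2 would read the raw tag values from the file, and only the conversion step would look like the above.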

Locational Privacy: According to the EFF (http://www.eff.org/wp/locational-privacy), “Locational privacy (also known as "location privacy") is the ability of an individual to move in public space with the expectation that under normal circumstances their location will not be systematically and secretly recorded for later use”.

Privacy concerns regarding the massive amounts of information shared on social networking platforms are far from new. Since the early days of social networks such as twitter, facebook and foursquare, security experts and privacy advocates have tried hard to point out the potential risks that this sharing paradigm poses to our privacy.

Installation

Creepy is written in python (hence the .py in cree.py). Its source code is available on Github, and it also comes as a packaged binary for debian-based linux distributions (Debian, Ubuntu, Backtrack) and MS Windows (XP and later).

Linux: On Ubuntu / Backtrack, users need to add the repository to their sources list (/etc/apt/sources.list).

Ubuntu 11.04 : ~$ sudo add-apt-repository ppa:jkakavas/creepy

Ubuntu 10.10 : ~$ sudo add-apt-repository ppa:jkakavas/creepy

Ubuntu 10.04 : ~$ sudo add-apt-repository ppa:jkakavas/creepy-lucid

Backtrack 5 :

Creepy will soon be in the official repositories of Backtrack 5. Until then, you can use the repository for Ubuntu lucid:

~# add-apt-repository ppa:jkakavas/creepy-lucid

Backtrack 4 : ~# echo 'deb http://people.dsv.su.se/~kakavas/creepy/binary/' >> /etc/apt/sources.list

Then, update with

~$ sudo apt-get update

and install with

~$ sudo apt-get install creepy

On Ubuntu, creepy will be located under Applications → Internet → creepy and on Backtrack under Backtrack → Information gathering → creepy.

On systems running MS Windows, the installer can be downloaded from Github (https://github.com/ilektrojohn/creepy/downloads).

Use - features

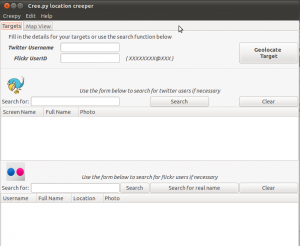

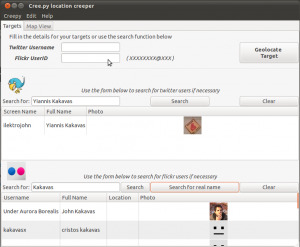

Creepy's main functionality is divided into two tabs in the main interface: the “targets” tab and the “mapview” tab. Starting Creepy will bring you to the targets tab.

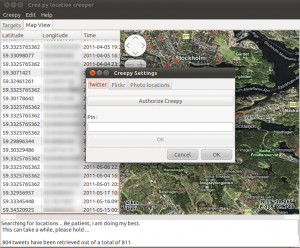

Before we can start using it, we should authorize Creepy to use our twitter account in order to access the twitter API. This step is necessary if we want to use the search function to look for twitter users within Creepy, and to access users we follow who have non-public (protected) timelines. This can be done by navigating to Edit → Settings.

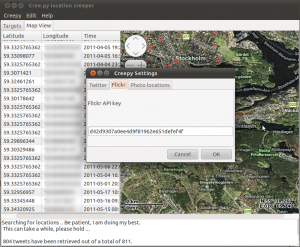

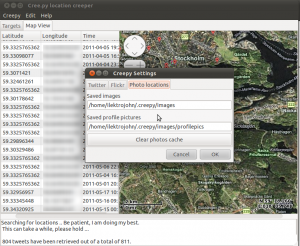

Clicking on “Authorize Creepy” opens the web browser and points it to twitter, where, after providing the credentials for our account, we receive a PIN. Entering this PIN back in Creepy and clicking “OK” completes the authorization process. The authentication and authorization process uses the OAuth protocol, so user credentials are not shared with the application itself. The authorization can be revoked at any time by visiting the account settings on www.twitter.com. The next two tabs in the settings can be used to specify a key for accessing the Flickr API, should this be necessary (Creepy comes with a predefined API key, so there is no need to change it unless it stops working), and to specify the directory on our local disk where photos are downloaded in order to be analyzed for geolocation-related EXIF tags.

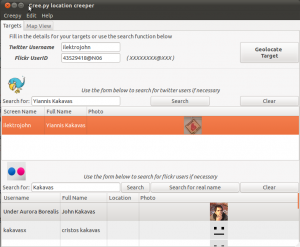

Now that we have authorized Creepy to use our twitter account and edited the settings to our preference, we are ready to use the application. If we do not know the twitter handle or the Flickr ID of the user we want to “target”, we can use the built-in search functions for the two services.

For twitter, we can search by username, full name and email. For Flickr, we can search by full name or username, by selecting the respective search button. The search results include the username, the full name, a profile picture for the user and some additional information such as the declared location, if applicable.

Double clicking on the correct result will populate the text fields at the top. Alternatively, we can provide the twitter username or Flickr ID manually if we know it beforehand.

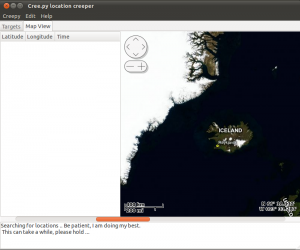

Once we are satisfied with our search queries, we hit “Geolocate Target”.

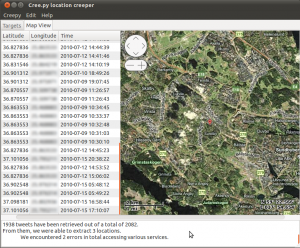

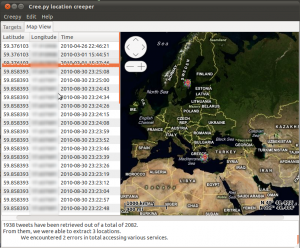

The retrieval of geolocation information can be a somewhat lengthy process, depending on the number of tweets the “target” has and on how many of those tweets include a photo that needs to be downloaded and analyzed. It can take anywhere from 1-2 minutes up to a quarter of an hour, so please be patient. Once the retrieval and analysis are over, we are presented with the results in something that will look quite similar to the following:

On the left side we can see a list of all the retrieved locations in the form of latitude, longitude, timestamp triples, and on the right side the embedded map with all the locations, focused initially on the most recent location retrieved. At the bottom of the user interface, there is a text field with useful information about the retrieval process, such as how many tweets were analyzed, and information about possible errors that Creepy encountered while trying to access the targeted services.

From here, we can navigate the map using the embedded controls or simply by dragging it around. We can zoom in for a detailed view of specific geographic areas, or zoom out for a global overview of the retrieved locations across wider areas.

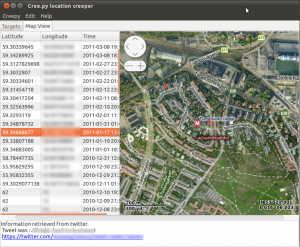

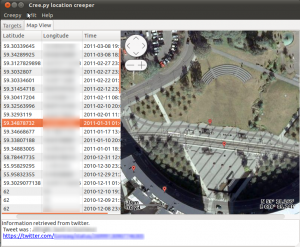

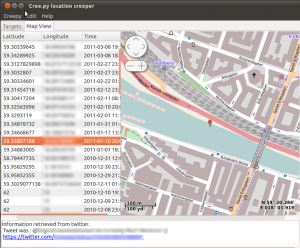

Alternatively, we can navigate through the results by using the location list on the left. Double clicking on any location in the list will focus the map on that specific geographic location and will also present the relevant contextual information in the text area underneath the map.

As we can see, the geolocation information was retrieved from twitter, and we can also see what the “target” tweeted from that specific location along with a link to the actual tweet on twitter's website. (Information is blurred out due to privacy concerns.) Right-clicking on a location in the list gives us the option either to copy the latitude-longitude tuple to the system's clipboard or to open the location in our browser using Google Maps. This can be very handy in cases where Google's Street View gives us a real-world, though probably not real-time, impression of the target's location.

We can also select the map provider and the associated view from a list that includes Google (satellite, street, hybrid views), Virtual Earth Maps (satellite, street, hybrid views) and OpenStreetMap, among others.
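Opening a location in the browser essentially means building a map URL from the latitude-longitude tuple. The sketch below shows one way this could be done. The `maps_url` helper and the exact URL layout are assumptions made for illustration, not necessarily what Creepy itself does.

```python
from urllib.parse import urlencode


def maps_url(latitude, longitude):
    """Build a Google Maps query URL for a coordinate pair (assumed URL layout)."""
    # Six decimal places is roughly 10 cm of precision, more than GPS delivers.
    query = urlencode({"q": f"{latitude:.6f},{longitude:.6f}"})
    return "https://maps.google.com/maps?" + query


url = maps_url(59.3293, 18.0686)
print(url)
# The standard library can hand the URL to the default browser:
# import webbrowser; webbrowser.open(url)
```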

All the geolocation information that is retrieved for a “target” is cached internally by Creepy. That means that the next time we search for the same target, only the newest tweets will be retrieved and analyzed, and all previously known locations will be loaded from the cache. This has three benefits. We limit calls to the twitter and Flickr APIs, which reduces the chance of hitting the APIs' rate limits and rendering the application unusable for a period of time. We minimize network usage. And we ensure that the information persists in case the “target” decides to erase some of it, or in case the services are temporarily unavailable. Moreover, all errors are cached. As an example, if for some reason Creepy was not able to download and analyze a number of photographs from some image hosting service, it “remembers” those specific images and will try to access them again the next time we search for that “target”.
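The caching behaviour described above can be sketched roughly as follows. This is an illustrative in-memory model, not Creepy's actual implementation: the `LocationCache` class, the `refresh` helper and the `fetch_newer_than` callback are all hypothetical names. The idea of asking only for items newer than the last one seen is similar in spirit to the `since_id` parameter of twitter's timeline API.

```python
class LocationCache:
    """Per-target memory of known locations, newest item id and failed downloads."""

    def __init__(self):
        self._targets = {}

    def _entry(self, target):
        return self._targets.setdefault(
            target, {"newest_id": None, "locations": [], "failed": set()}
        )

    def newest_id(self, target):
        return self._entry(target)["newest_id"]

    def locations(self, target):
        return list(self._entry(target)["locations"])

    def pending_retries(self, target):
        # Photo URLs that could not be analyzed and should be tried again.
        return sorted(self._entry(target)["failed"])

    def update(self, target, new_items, failed_urls=(), recovered_urls=()):
        entry = self._entry(target)
        for item_id, location in new_items:
            entry["locations"].append(location)
            if entry["newest_id"] is None or item_id > entry["newest_id"]:
                entry["newest_id"] = item_id
        entry["failed"].update(failed_urls)
        entry["failed"].difference_update(recovered_urls)


def refresh(cache, target, fetch_newer_than):
    """Fetch only items newer than the cached ones, then return all locations.

    fetch_newer_than(target, since_id) is a hypothetical callback that returns
    a pair: a list of (item_id, location) tuples and a list of failed URLs.
    """
    new_items, failed = fetch_newer_than(target, cache.newest_id(target))
    cache.update(target, new_items, failed_urls=failed)
    return cache.locations(target)
```

A persistent version would write the same structure to disk, so that known locations survive even if the original posts are later deleted.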

Apart from this internal caching, we have the option to export our results as a comma separated values (.csv) file that can be imported into any other application. The export function (Creepy → Export as) can also export the location list as a kml file, to be used with the Google Earth software for further analysis.
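For readers who want to post-process the location list themselves, the two export formats are simple to produce. The sketch below is only an illustration: the column names and the placemark layout are assumptions, not the exact files that Creepy writes. One detail that is easy to get wrong is that KML expects coordinates in longitude,latitude order.

```python
import csv
import io
from xml.sax.saxutils import escape


def to_csv(locations):
    """Serialize (latitude, longitude, timestamp) triples as CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["latitude", "longitude", "timestamp"])  # assumed header
    writer.writerows(locations)
    return buffer.getvalue()


def to_kml(locations):
    """Serialize the same triples as a minimal KML document."""
    placemarks = "".join(
        "<Placemark><name>{}</name>"
        "<Point><coordinates>{},{}</coordinates></Point></Placemark>".format(
            escape(str(timestamp)), longitude, latitude  # KML order: lon,lat
        )
        for latitude, longitude, timestamp in locations
    )
    return (
        '<?xml version="1.0" encoding="UTF-8"?>'
        '<kml xmlns="http://www.opengis.net/kml/2.2"><Document>'
        + placemarks
        + "</Document></kml>"
    )


sample = [(59.3293, 18.0686, "2011-05-23 14:32")]
print(to_csv(sample))
print(to_kml(sample))
```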

Internals

Overview

Let's now take a brief look inside Creepy: how it works, which python libraries it uses, and so on. Creepy is an open source python application, licensed under GPLv3. It is organized in six classes.

- CreepyUI class takes care of building the GUI and the functions related to that.

- Cree class holds the main control of the functionality. Its methods are invoked by GUI events and in turn call methods from the rest of the classes, which actually implement the needed functions.

- Twitter and Flickr classes hold the respective functionality regarding those services.

- URLAnalyzer holds the functionality for analyzing links in tweets, determining whether they are links of one of the supported image hosting services, downloading the embedded photos and analyzing them for geolocation tags in EXIF meta-data.

- Helper class holds some functions for additional features.
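As an illustration of the first step that URLAnalyzer performs, deciding whether a link in a tweet points to a supported image hosting service, here is a minimal sketch. The host list is taken from the services named earlier in the article. The function names are hypothetical and the matching logic is an assumption, so the real class may work differently.

```python
from urllib.parse import urlparse

# Hosts of the image services mentioned in the article (assumed domain names).
SUPPORTED_HOSTS = {"yfrog.com", "twitpic.com", "img.ly", "plixi.com"}


def is_supported_image_link(url):
    """Return True if the URL's host is one of the supported image services."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[len("www."):]
    return host in SUPPORTED_HOSTS


def image_links(tweet_text):
    """Pick out the links in a tweet that are worth downloading and analyzing."""
    words = tweet_text.split()
    return [
        word
        for word in words
        if word.startswith(("http://", "https://")) and is_supported_image_link(word)
    ]


print(image_links("lunch http://twitpic.com/abc123 via http://example.com/x"))
```

Each link that passes this filter would then be downloaded and its EXIF meta-data checked for GPS tags.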

Used Libraries

The user interface is written with the Gtk+ toolkit via pygtk. Interaction with the twitter API is achieved with tweepy (https://github.com/tweepy/tweepy), while the Flickr API is accessed via Flickrapi (http://stuvel.eu/flickrapi). None of the map-related functionality would be possible without the python bindings that osmgpsmap (http://nzjrs.github.com/osm-gps-map/) offers for its Gtk+ map widget. Finally, EXIF meta-data are retrieved from photos with pyexiv2 (http://tilloy.net/dev/pyexiv2/), a python binding to exiv2, a C++ library for EXIF manipulation.

The source code is hosted on Github (https://github.com/ilektrojohn/creepy) if you feel the need to look through it in detail and familiarize yourself with the internal functionality.

What can Creepy do for you?

What is the rationale behind Creepy, one might ask, and in which cases can it be of assistance as a tool? Creepy has a twofold goal:

Educational Tool

The first goal is to raise awareness among the users of social networking platforms: awareness of the amount of information they actually share, and awareness of the potential misuse of this public information by people with not so benign intentions. It is a showcase of the potential risks of excessively sharing private information in a public manner. Creepy allows users to check and visualize their online presence and realize the magnitude of information they unwittingly put out there. Hopefully this visualization will make them revisit their online conduct, by deleting information they have already posted, deciding to share less, or at least fine-tuning who has access to it.

Information Gathering

The second goal was to create a handy tool for security professionals. Creepy can be really helpful in the early information gathering phase of a penetration test. Individually, those small bits of information might not say much, but combining them into an aggregated result enables, or at least facilitates, a behavioral analysis of the “targeted” individual. For example, the information that X was in a coffee-shop at 14.32 on May 23 doesn't say anything in itself. The fact that X is in that coffee-shop around 14.30 every Monday gives away much more about his habits. Likewise, Y posting on twitter at random times from a specific location might not reveal much. However, if by analyzing the locational timeline in Creepy we see that all tweets from that specific location are posted between 8.00 and 16.00, and only on working days, there is a very good chance that Y's workplace is at this location. All this information can be of real help in the social engineering part of a penetration test. These habits can be used for successful pretexting and for gaining the trust of our “target”.
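The kind of pattern spotting described above can be done by hand on an exported location list, or with a few lines of code. The sketch below is a hypothetical illustration that works on timestamps already grouped by location. It is not a feature of Creepy, and the function names and the 8.00 to 16.00 window are simply taken from the example in the text.

```python
from collections import Counter
from datetime import datetime

DAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday"]


def weekly_pattern(timestamps):
    """Count sightings per (weekday name, hour of day) slot."""
    return Counter((DAYS[ts.weekday()], ts.hour) for ts in timestamps)


def looks_like_workplace(timestamps, start_hour=8, end_hour=16):
    """True if every sighting falls on a working day within the given hours."""
    return bool(timestamps) and all(
        ts.weekday() < 5 and start_hour <= ts.hour < end_hour for ts in timestamps
    )


# Made-up sightings of one "target" at one location.
coffee_shop = [
    datetime(2011, 5, 23, 14, 32),
    datetime(2011, 5, 30, 14, 28),
    datetime(2011, 6, 6, 14, 35),
]
print(weekly_pattern(coffee_shop).most_common(1))
print(looks_like_workplace(coffee_shop))
```

A single dominant slot in `weekly_pattern` suggests a recurring habit, while `looks_like_workplace` is a deliberately crude heuristic that would need tuning for shift workers or different time zones.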


What can we do to protect our locational privacy?

Well, the answer to that is surprisingly simple: restrain our need for exhibitionism. Education and caution. Treat the web for what it is: a public place where information, once released, will be persistent and out of our control. More practical advice:


- Disable geolocation services in smart-phones when they are not actually needed.

- Think before opting in for geolocation features in social networking platforms. Is it worth it?

- Location aware (based) social networking platforms. Seriously? Can you spell “stalk me”?

- Twitter gives you the possibility to erase all geolocation information from your timeline. Use it.

- “Creepy” yourself. Visualize the digital locational footprint you have left online. Did you actually share more with the whole world than you thought you did?

For more information, comments, discussion and rants, my blog is located at http://diveintoinfosec.wordpress.com and my twitter handle is @ilektrojohn.