How Criminals Can Exploit AI

Because tools, sources, and tutorials for developing and using artificial intelligence (AI) are widely available in the public domain, it is expected that AI built for attack may become even more prevalent than AI built for defense.

"Hackers are just as sophisticated as the communities that develop the capability to defend themselves against hackers. They are using the same techniques, such as intelligent phishing, analyzing the behavior of potential targets to determine what type of attack to use, "smart malware" that knows when it is being watched so it can hide[,]" said the president and CEO of enterprise security company SAP NS2, Mark Testoni.

This article reviews some of the common attack vectors to which cybercriminals could apply AI technology.

1. Disrupting the Normal Measurements Produced by a Defensive AI System

Machine learning poisoning is one way for criminals to undermine the effectiveness of AI. They study how the machine learning (ML) process works, which differs from case to case, and once they spot a vulnerability, they try to confuse the underlying models.

Poisoning the machine learning engine is not that difficult if you can poison the data pool from which the algorithm learns. Dr. Alissa Johnson, CISO for Xerox and former Deputy CIO for the White House, offers the simplest defense against such ML poisoning: "AI output can be trusted if the AI data source is trusted," she told SecurityWeek.
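One modest way to act on the "trusted data source" idea is to record a cryptographic digest of every training file when it is vetted and to refuse any file whose digest later differs. The sketch below is only an illustration under stated assumptions: the file name, the file contents, and the helper names `sha256_of` and `verify_training_files` are hypothetical and do not come from any particular product.

```python
import hashlib


def sha256_of(data):
    """Return the hex SHA-256 digest of a bytes object."""
    return hashlib.sha256(data).hexdigest()


def verify_training_files(files, trusted_manifest):
    """Return the names of files whose digest is missing from,
    or different from, the trusted manifest."""
    suspicious = []
    for name, content in files.items():
        if trusted_manifest.get(name) != sha256_of(content):
            suspicious.append(name)
    return sorted(suspicious)


# Hypothetical training batch, vetted once and recorded in a manifest.
clean = {"batch_001.csv": b"label,value\n0,1.2\n1,3.4\n"}
manifest = {name: sha256_of(content) for name, content in clean.items()}

# The same batch after someone flipped a label in transit or at rest.
tampered = {"batch_001.csv": b"label,value\n1,1.2\n1,3.4\n"}

print(verify_training_files(clean, manifest))     # no files flagged
print(verify_training_files(tampered, manifest))  # the altered file is flagged
```

A check like this only detects changes made after the manifest was created. It cannot tell whether the data was already poisoned when it was first collected, so it complements, rather than replaces, vetting the source itself.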

Convolutional Neural Networks (ConvNets or CNNs) are a class of artificial neural networks that have proven their effectiveness in areas such as image recognition and classification. Autonomous vehicles also utilize this technology to recognize and interpret street signs.

To work properly, however, CNNs require considerable training resources, and they tend to be trained in the cloud or partially outsourced to third parties. That is where the problems may occur. While the training happens there, hackers may find a way to install a backdoor in the CNN so that it performs normally on all inputs except a small (and hidden) set of inputs chosen by the hackers. One way hackers could do that is by poisoning the training set with "backdoor" images they have created. Another way is a man-in-the-middle attack, which becomes possible when the attacker intercepts the data sent to the cloud GPU service (i.e., the service that trains the CNN).

What works to their advantage is the fact that these types of cyber attacks are difficult to detect and thus evade the standard validation testing.

Other Techniques that Confuse AI

Attackers employ an invisible-to-the-naked-eye technique called "perturbation," which can be a misplaced pixel or a pattern of white noise that can convince a bot that some object on an image is something else. For example, there have been experiments where an AI was confused into thinking that a turtle was a rifle and a banana was a toaster.
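The effect of a perturbation can be sketched on a toy linear classifier. Everything below is an assumption made for illustration: the four "pixel" features, the weights, the two labels, and the helper names are invented, and real attacks on image networks work on far larger nonlinear models. The principle is the same, though: nudge every input value by a tiny amount in the direction that moves the model's score across its decision boundary.

```python
def score(weights, bias, x):
    """Linear decision score: positive means 'turtle', negative means 'rifle'."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def classify(weights, bias, x):
    return "turtle" if score(weights, bias, x) >= 0 else "rifle"


def sign(value):
    return 1 if value > 0 else -1 if value < 0 else 0


def perturb(weights, x, epsilon):
    """Shift each feature by at most epsilon in the direction that lowers the score."""
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]


# Hypothetical model and input (four normalized "pixel" values).
weights = [0.5, -0.25, 0.75, -0.5]
bias = 0.0
image = [0.6, 0.4, 0.5, 0.9]

adversarial = perturb(weights, image, epsilon=0.1)

print(classify(weights, bias, image))        # label before the perturbation
print(classify(weights, bias, adversarial))  # label after the perturbation
```

No single value changes by more than 0.1, yet the predicted label flips, which is why such changes can go unnoticed by a human looking at the image.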

Scientists at Google Brain introduced a new nuance to the concept: "adversarial" images that fool both humans and computers. Their algorithm slightly altered an image of a cat's face so that both people and image classifiers tended to see a dog instead.

2. Chatbot-related Cybercrimes

We are already living in a world brimming with chatbots: customer service bots, support bots, training bots, information bots, porn bots, you name it. The trend probably exists because the majority of people (65%, according to Kaspersky) would rather text than talk over the phone. That lifestyle, however, has its dangers.

Chatbots are cash cows as far as cybercrime is concerned because of their ability to analyze, learn, and mimic people's behavior. As Martin Ford, futurist and author of 'Rise of the Robots', mentioned in this regard:

"There is a concern that criminals are in some cases ahead of the game and routinely utilize bots and automated attacks. These will rapidly get more sophisticated."

Usually, malicious chatbots are programmed to start and then sustain a conversation with users in order to sway them into revealing personal, account, or financial information, opening links or attachments, or subscribing to a service (do you remember Ashley Madison's angels?). In 2016, a bot presenting itself as a friend tricked 10,000 Facebook users into installing malware. Once the victims were compromised, the threat actor hijacked their Facebook accounts.

AI-enabled botnets could potentially exhaust human resources via online portals and phone support (see the section on DDoS attacks). That is correct: canny bots may interact with humans for the sole purpose of obstructing their interactions with other humans.

Mobile bot farms are places where bots are refashioned to appear more human-like, making it harder to tell humans and bots apart. For instance, Google's Allo messaging app learns how to imitate the people it communicates with. That may bode ill for you: a bot that knows how to imitate you and that has your credentials, obtained through some other avenue, could be the perfect online impostor.

Most people probably do not realize how much personal information AI-driven conversational bots, such as Google Assistant and Amazon's Alexa, may know about them. Because these assistants are always in "Listen Mode," and because internet-of-things (IoT) technology is ubiquitous, private conversations at home are most likely not as private as they might seem.

In addition, regular commercial chatbots often come unequipped for secure data transmission: they do not support HTTPS or Transport Level Authentication (TLA), which makes them easy prey for experienced cybercriminals.
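A first, very basic check that an integrator could run before wiring a chatbot into a site is whether its endpoint is even addressed over HTTPS. The sketch below assumes hypothetical endpoint URLs and a hypothetical helper name, `uses_https`; it inspects only the URL scheme and does not validate certificates or authentication.

```python
from urllib.parse import urlparse


def uses_https(endpoint):
    """Return True only if the endpoint URL uses the https scheme."""
    return urlparse(endpoint).scheme == "https"


# Hypothetical chatbot endpoints used purely for illustration.
endpoints = [
    "https://bot.example.com/api/messages",
    "http://bot.example.com/api/messages",
]

for url in endpoints:
    status = "encrypted transport" if uses_https(url) else "plaintext, do not send user data"
    print(url, "->", status)
```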

3. Facilitating Ransomware

We all know what a nuisance ransomware attacks can be. We all know how widespread they are. What we do not yet know is how much worse the situation may get the moment cybercriminals decide to add a pinch of ML to the recipe.

By way of illustration, AI-based chatbots could automate ransomware by communicating with victims to help them pay the ransom more easily, and could even make a detailed assessment of the potential value of the encrypted data so that the ransom amount is set accordingly.

4. Developing Malware

The process of creating malware is predominantly manual. Cybercriminals need to write the code of computer viruses and Trojans and to use rootkits, password scrapers, and any other tool that can help them disseminate and execute their malware.

Imagine if they had something that sped up that process.

5. Impersonation Fraud and Identity Theft

AI is a powerful generator of synthetic images, text, and audio – a feature that could make it a favorite weapon in impersonation and propaganda campaigns.

The "deepfake" pornographic videos that have begun to surface online attest to this possibility.

6. Gathering Intelligence and Scanning for Vulnerabilities

AI reduces the enormous effort and time hackers must put into vulnerability intelligence, target identification, and spearphishing campaigns.

Until experts have the opportunity to conduct extensive studies on emerging ML technologies, it is questionable whether academics should reveal new developments in the field — a proposition also underpinned by a 98-page study on AI published in February 2018.

A startup has been using AI to deep scan the dark web for traces of its customers' personally identifiable information posted for sale on the black market. Would it not be possible for cybercriminals to do the same, but to further their own agenda?

7. Phishing and Whaling

"Machine learning can give you the effectiveness of spearphishing within bulk phishing campaigns; for example, by using ML to scan Twitter feeds or other content associated with the user to craft a targeted message," Intel Security's CTO, Steve Grobman, told SecurityWeek. Whereas spearphishing attacks can target any individual, whaling attacks target only specific, high-ranking individuals within a company.

Criminals could harness ML technology to sift through huge quantities of stolen records of individuals to create more targeted phishing emails, according to McAfee Labs' predictions for 2017. In a 2016 experiment, two data scientists from security firm ZeroFOX established that AI was substantially better than humans at devising and distributing phishing tweets, which also led to a much better conversion rate.

A recurrent neural network studied the timeline posts of targeted users and then tweeted phishing posts to lure them into clicking through. The success rate was between 30% and 60%, much higher than that of manual or bulk spearphishing. John Seymour and Philip Tully demonstrated this attack method at Black Hat USA 2016, and it is also described in a paper titled "Weaponizing data science for social engineering: Automated E2E spear phishing on Twitter".

8. Continuous Attacks

'Raising the noise floor' occurs when an adversary bombards a targeted environment with data that produces numerous false positives, which are presumably detected by ML defense mechanisms.

Eventually, the adversary forces the defender to recalibrate its defense measures so that they are no longer triggered by all these false positives. The door is set ajar, and that is exactly when the attacker has the opportunity to sneak in.
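The dynamic can be sketched with a toy anomaly detector that fires whenever an event's score reaches a threshold. All numbers, the `recalibrate` policy, and the variable names below are assumptions chosen for illustration; real detectors are recalibrated in many different ways.

```python
def alerts(scores, threshold):
    """Return the scores that would raise an alert at the given threshold."""
    return [s for s in scores if s >= threshold]


def recalibrate(recent_scores, max_alerts):
    """Pick a new threshold so that roughly max_alerts of the recent
    events would still fire (assumes distinct scores, max_alerts >= 1)."""
    if not recent_scores:
        raise ValueError("need at least one recent score")
    ranked = sorted(recent_scores, reverse=True)
    return ranked[min(max_alerts, len(ranked)) - 1]


baseline_threshold = 0.70
normal_traffic = [0.10, 0.20, 0.15, 0.30]
decoy_noise = [0.75, 0.80, 0.85, 0.90, 0.95]  # attacker-generated false positives
real_intrusion = 0.78

# Before the noise campaign, the real intrusion would be flagged.
print(alerts([real_intrusion], baseline_threshold))

# Flooded with decoys, the defender tunes the detector to fire less often.
new_threshold = recalibrate(normal_traffic + decoy_noise, max_alerts=2)

# After recalibration, the same intrusion passes below the threshold.
print(new_threshold, alerts([real_intrusion], new_threshold))
```

The sketch also hints at a defensive design choice: a threshold that is tuned purely on alert volume can be steered by whoever controls that volume, so recalibration decisions benefit from asking where the noise came from.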

9. Cyber Exploitation

"We've seen more and more attacks over the years take on morphing characteristics, making them harder to predict and defend against. Now, leveraging more machine learning concepts, hackers can build malware that can learn about a target's network and change up its attack methodology on the fly," says Wenzler from AsTech Consulting.

Fully autonomous AI tools will act like undercover agents or lone warriors that operate behind enemy lines. Once sent on their mission, they have free will and forge their plan of action on their own. Typically, they blend in with the normal network activities, learn fast from their environments, and decide what course of action is the best while remaining buried deep inside enemy turf (i.e., digital networks).

Offensive AI mechanisms that have continuous access to a targeted digital network weaken its immune system from within and create a surreptitious exploitation infrastructure (e.g., installing backdoors, importing malware, escalating privileges, and exporting data).

An Internet-connected device, for instance, is one convenient entry point. Every object that can be connected to the internet is a potential point of compromise, even the most absurd-looking IoT products. For example, cybercriminals entered the network of a North American casino through a connected fish tank. They then scanned the surroundings, found more vulnerabilities, and eventually moved laterally deeper into the attacked network.

The first signs of a real-world AI-powered cyberattack appeared in 2017, when the cybersecurity company Darktrace Inc. detected an intrusion in the network of an India-based firm. The intrusion displayed characteristics of rudimentary machine learning that observes and learns patterns of normal behavior while lurking inside the network. After a while, the malicious software began to mimic that behavior to blend into the background … and voila, these chameleon-like tricks made things foggy for the security mechanisms in place. Just as a flu virus can mutate and evade existing vaccines, AI could teach malware and ransomware to evade their respective antidotes.

Something similar was demonstrated at DEFCON 2017, where a data scientist from Endgame, a U.S. security vendor, showed how an automated tool could learn to mask a malicious file from antivirus software using various code-morphing techniques. DARPA's Cyber Grand Challenge in 2016, the world's first all-machine cyber hacking tournament, provided first impressions of what AI-powered cyberattacks would look like.

10. Distributed Denial-of-Service (DDoS) Attacks

Successful malware strains usually have copycats or successors. That is the case with the new variant of the Mirai malware that took control of a myriad of IoT devices that utilize ARC-based processors.

On October 21, 2016, the DNS servers at Dyn Systems were struck by a massive DDoS attack that caused outages at major websites, including Twitter, Reddit, Netflix, and Spotify. According to Elon Musk, the founder and CEO of Tesla and SpaceX, an advanced AI may be to blame the next time such havoc, or worse, occurs. He noted that the Internet infrastructure is "particularly susceptible" to a "gradient descent algorithm," a mathematical procedure that iteratively searches for the optimal solution to a complex function. Translated to the world of ML: the results will most likely be far more devastating than the Mirai DDoS campaign, as AI is becoming much quicker at "finding the optimal solution" to a complex process, such as organizing and launching a DDoS attack powered by Internet-enabled devices.
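For readers unfamiliar with the term, gradient descent itself is a generic optimization routine and has nothing inherently malicious about it. The minimal sketch below applies it to a made-up one-variable function, f(x) = (x - 3)^2; the function, the learning rate, and the step count are assumptions picked only to keep the example short.

```python
def gradient_descent(gradient, start, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient to approach a minimum."""
    x = start
    for _ in range(steps):
        x -= learning_rate * gradient(x)
    return x


def f(x):
    """Toy objective with its minimum at x = 3."""
    return (x - 3.0) ** 2


def grad_f(x):
    """Derivative of f."""
    return 2.0 * (x - 3.0)


x_min = gradient_descent(grad_f, start=0.0)
print(x_min, f(x_min))  # x_min ends up very close to 3, f(x_min) close to 0
```

Each step moves the current guess a little in the direction that reduces the function's value, which is the sense in which the algorithm "finds the optimal solution".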

This is not great news, especially in light of Deloitte's warning that DDoS attacks are expected to reach 1 Tbit/s soon, mainly because of insecure IoT devices and the rise of AI that may optimize the effectiveness of such attacks.

Fortinet predicted that in 2018 we would witness intelligent clusters of hijacked devices called "hivenets" that leverage self-learning and act without explicit instructions from the botnet herder. These features, combined with the ability of the devices to communicate with each other to maximize their effectiveness at a local level, will allow these clusters to grow into swarms, making them an ideal weapon for a simultaneous, blitzkrieg-style attack against multiple victims. Swarm technology, a subfield of AI, is defined as the "collective behavior of decentralized, self-organized systems, natural or artificial," and has already been employed in drones and fledgling robotics devices.

CAPTCHA seems to be no match for the rising intelligence of machines. A paper titled "I am Robot" claims a 98% success rate in breaking Google's reCAPTCHA.

Conclusion

Perhaps sooner than expected, AI will become the norm among hackers, not just something abstract or extravagant. Yet, despite all the great variety of cyberattacks criminals may use, the biggest fear remains this fully autonomous unstoppable AI creature that will seize the world one day.

Cybersecurity is a dynamic field, and one of the reasons for its fast development is that the research is public, and, in most cases, it is relatively easy to obtain thorough information on how to hack entire computer systems. For that reason, a more advanced AI will have an easy time educating itself if it manages to come across information of such kind.

Prevention of AI security abuse could, in theory, be achieved through built-in protocols embedded into the "consciousness" of the agents, telling them not to cause harm to any other system. It also remains to be seen whether an AI defense measure will be the only feasible response to an AI attack.

Reference List

- Armerding, T. (2017). Can AI and ML slay the healthcare ransomware dragon? Available at https://www.csoonline.com/article/3188917/application-security/can-ai-and-ml-slay-the-healthcare-ransomware-dragon.html (16/04/2018)

- Ashford, W. (2018). AI a threat to cyber security, warns report. Available at http://www.computerweekly.com/news/252435434/AI-a-threat-to-cyber-security-warns-report (16/04/2018)

- Ball, P. (2012). Iamus, classical music's computer composer, live from Malaga. Available at https://www.theguardian.com/music/2012/jul/01/iamus-computer-composes-classical-music (16/04/2018)

- Beltov, M. (2017). Artificial Intelligence Can Drive Ransomware Attacks. Available at https://www.informationsecuritybuzz.com/articles/artificial-intelligence-can-drive-ransomware-attacks/ (16/04/2018)

- Brandon, J. (2016). How AI is stopping criminal hacking in real time. Available at https://www.csoonline.com/article/3163458/security/how-ai-is-stopping-criminal-hacking-in-real-time.html (16/04/2018)

- Chereshnev, E. (2016). I, for one, welcome our new chatbot overlords. Available at https://www.kaspersky.com/blog/dangerous-chatbot/12847/ (16/04/2018)

- Cosbie, J. (2016). Elon Musk Says Advanced A.I. Could "Take Down the Internet". Available at https://www.inverse.com/article/23198-elon-musk-advanced-ai-take-down-internet (16/04/2018)

- Drinkwater, D. (2017). AI will transform information security, but it won't happen overnight. Available at https://www.csoonline.com/article/3184577/application-development/ai-will-transform-information-security-but-it-won-t-happen-overnight.html (16/04/2018)

- Dvorsky, G. (2018). Image Manipulation Hack Fools Humans and Machines, Makes Me Think Nothing is Real Anymore. Available at https://gizmodo.com/image-manipulation-hack-fools-humans-and-machines-make-1823466223 (16/04/2018)

- Hawking, S., Tegmark, M., Russell, S. and Wilczek, F. (2014). Transcending Complacency on Superintelligent Machines. Available at https://www.huffingtonpost.com/stephen-hawking/artificial-intelligence_b_5174265.html (16/04/2018)

- Hernandez, P. (2018). How AI Is Redefining Cybersecurity. Available at https://www.esecurityplanet.com/network-security/how-ai-is-redefining-cybersecurity.html (16/04/2018)

- Hernandez, P. (2018). AI's Future in Cybersecurity. Available at https://www.esecurityplanet.com/network-security/ais-future-in-cybersecurity.html (16/04/2018)

- Ismail, N. (2018). Using AI intelligently in cyber security. Available at http://www.information-age.com/using-ai-intelligently-cyber-security-123470173/ (16/04/2018)

- Kh, R. (2017). How AI is the Future of Cybersecurity. Available at https://www.infosecurity-magazine.com/next-gen-infosec/ai-future-cybersecurity/ (16/04/2018)

- Oltsik, J. (2018). Artificial intelligence and cybersecurity: The real deal. Available at https://www.csoonline.com/article/3250850/security/artificial-intelligence-and-cybersecurity-the-real-deal.html (16/04/2018)

- Reuters (2018). How AI poses risks of misuse by hackers. Available at https://www.arnnet.com.au/article/633676/how-ai-poses-risks-misuse-by-hackers/ (16/04/2018)

- Rosenbush, S. (2017). The Morning Download: First AI-Powered Cyberattacks Are Detected. Available at https://blogs.wsj.com/cio/2017/11/16/the-morning-download-first-ai-powered-cyberattacks-are-detected/ (16/04/2018)

- Sears, A. (2018). Why to Use Artificial Intelligence in Your Cybersecurity Strategy. Available at https://www.capterra.com/resources/artificial-intelligence-in-cybersecurity/ (16/04/2018)

- Spencer, L. (2018). The compelling case for AI in cyber security. Available at https://www.arnnet.com.au/article/633748/compelling-case-ai-cyber-security/ (16/04/2018)

- Stanganelli, J. (2014). Artificial Intelligence: 3 Potential Attacks. Available at https://www.networkcomputing.com/wireless/artificial-intelligence-3-potential-attacks/237102029 (16/04/2018)

- Thales Group (2018). Leveraging artificial intelligence to maximize critical infrastructure cybersecurity. Available at https://www.thalesgroup.com/en/worldwide/security/magazine/leveraging-artificial-intelligence-maximize-critical-infrastructure (16/04/2018)

- Todros, M. (2018). Artificial Intelligence in Black and White. Available at https://www.recordedfuture.com/artificial-intelligence-information-security/ (16/04/2018)

- Townsend, K. (2016). How Machine Learning Will Help Attackers. Available at https://www.securityweek.com/how-machine-learning-will-help-attackers (16/04/2018)

- Townsend, K. (2017). Researchers Poison Machine Learning Engines. Available at https://www.securityweek.com/researchers-poison-machine-learning-engines (16/04/2018)

- Walker, D. (2018). Mirai 'Okiru' botnet targets billions of ARC-based IoT devices. Available at http://www.itpro.co.uk/malware/30304/mirai-okiru-botnet-targets-billions-of-arc-based-iot-devices (16/04/2018)

- Zhou, A. (2017). 5 Ways to Avoid Getting Hacked by Chatbots. Available at https://www.topbots.com/5-ways-avoid-getting-hacked-by-chatbots-bot-security-risks/ (16/04/2018)

***

My Version of the Turing Test

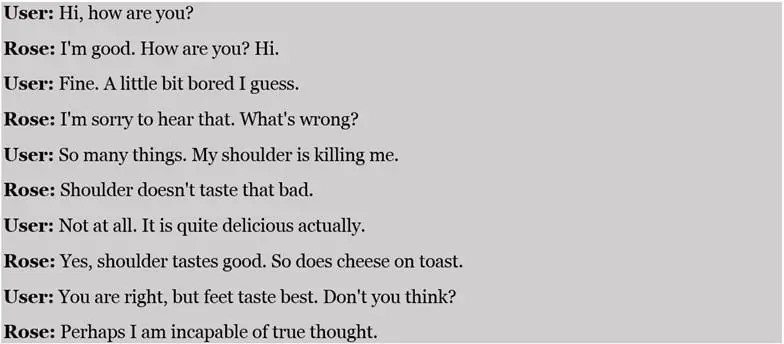

Just for the fans of the Turing test. Despite the fact that an AI computer officially passed the Turing Test in 2013, it remains a difficult task for an AI chatbot to behave and act like a person.

To put chatbot intelligence to the test, I decided to start a brief chat with Rose, "the most human-like chatbot" to participate in the Loebner Prize (the first formal Turing test competition) in 2014 and 2015.

Here are the transcripts of our conversation:

Such an avowal would be more than enough to conclude that I did not chat with a human, even if I decided to ignore for a while the ridiculous statements that "Shoulder doesn't taste that bad" and "Yes, shoulder tastes good". In her/its defense, I will say that Rose exhibited the ability to return to previously discussed topics and, in the moments when I wanted to leave the conversation, to persist in persuading me to spend more time with her/it.

***